In a previous post, I attempted to estimate how much pretraining data human brains actually get when you think about it carefully, to make the main point that humans don’t really learn most concepts from as “little” data as is typically assumed.

The secondary point was then a comparison, to show that human brains over a lifetime get a lot more pretraining data (measured in bits) than a text-only pretrained LLM like Llama 3 to learn (potentially) the same concept.

But clearly, text-only LLMs aren’t the only LLMs out there now. Multimodal pretrained models exist, and one very reasonable thought you might have after reading that post might sound like:

“Well, human brains don’t just process information from eyes and ears to acquire language. A text-only LLM has it so easy. They don’t need to first figure out how to use “eyes” to see edges and lines and things, or “ears” to pick out the start and end of phonemes before figuring out language on top of that. They get text as raw input into their brain. Us humans also have to do a lot of other things in tandem, like figuring out how to move our limbs to draw pictures or pick up cups of tea. Surely it makes sense that we use a lot more training data than a text-only LLM right?”

I agree.

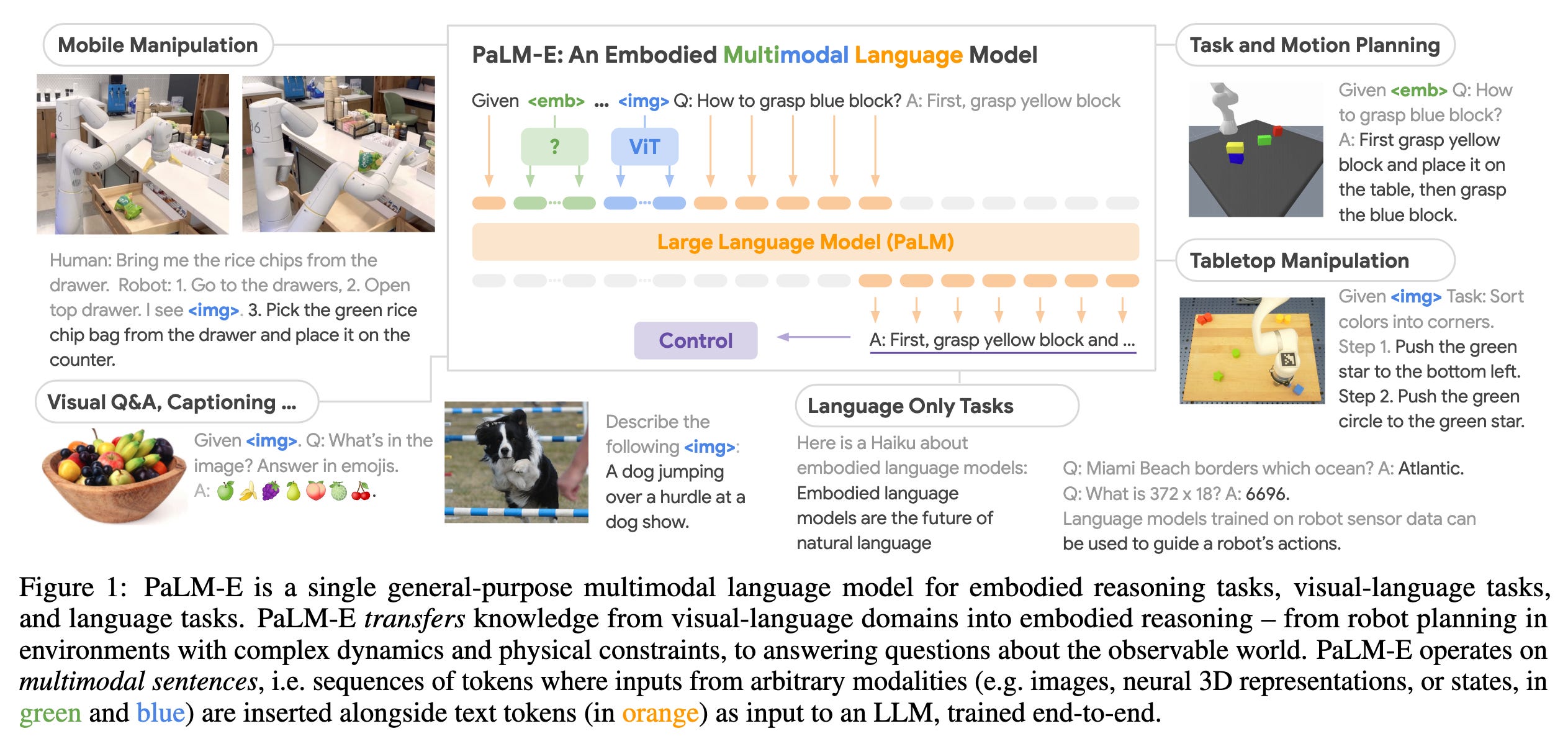

So let’s look at Google’s RT-2, a model that has vision, language, and action capabilities then. It still operates on roughly the same principles as a text-only LLM with tokens and all. It’s just that RT-2 now has multimodal “sentences”, and a token can be an image token, a text token, or an action token.

But first…

What’s an image token?

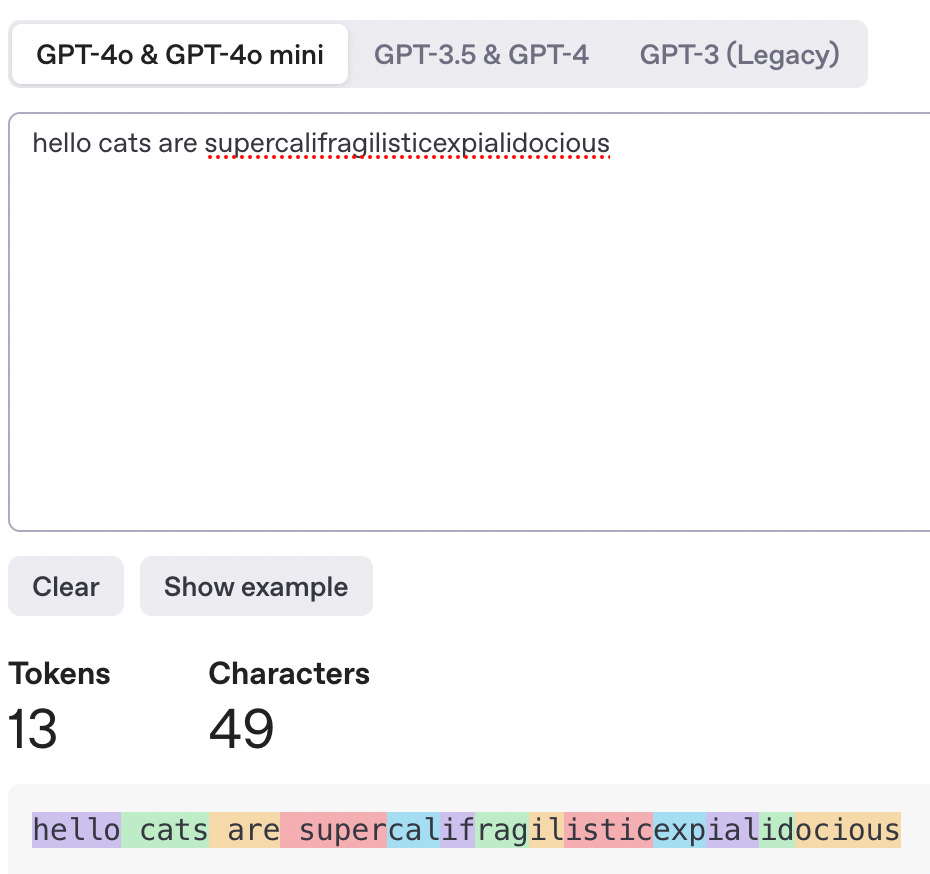

An image token is to an image, what a text token is to a text-only sentence. Remember, text tokens look like this:

For images, you basically cut up an image into smaller “patches”.

Each patch, like the larger image, is just a bunch of pixels put together, where each pixel has a [Red, Green, Blue] numeric value.

So, you can “flatten” a 3D image patch into a 1D sequence/sentence by listing out the R then G then B values in a sequence. People usually do a few more things to these patches for training efficiency’s sake, but that’s the basic idea. This is one of those things where it’s easier to understand if you visualize it in motion, so here’s a good explanation video for those interested.
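To make the flattening step concrete, here is a minimal sketch in plain Python. It assumes the image is a nested list of [R, G, B] values and that the image sides divide evenly by the patch size. The function name `image_to_patch_tokens` and the toy 4x4 image are made up for illustration, and real models do the "few more things" mentioned above (such as projecting each flattened patch through a learned layer), which are skipped here.

```python
def image_to_patch_tokens(image, patch_size):
    """Split an H x W image of [R, G, B] pixels into flattened patches.

    `image` is a list of rows; each row is a list of [R, G, B] lists.
    Returns one flat list of numbers per patch, in row-major patch order.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")

    tokens = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            flat = []
            for row in image[top:top + patch_size]:
                for pixel in row[left:left + patch_size]:
                    flat.extend(pixel)  # R, then G, then B
            tokens.append(flat)
    return tokens


# Toy 4x4 image (hypothetical data): each pixel is [row, column, 0].
toy_image = [[[r, c, 0] for c in range(4)] for r in range(4)]
patch_tokens = image_to_patch_tokens(toy_image, patch_size=2)

print(len(patch_tokens))     # 4 patches
print(len(patch_tokens[0]))  # 12 values: 2 * 2 pixels * 3 channels
print(patch_tokens[0])       # [0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0]
```

Each inner list is one "image token" in the sense used here: a 3D patch (height x width x colour channel) laid out as a 1D sequence.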

These flattened image patches are your “image tokens”, and are the equivalent of the text-based word fragments in a regular text-only LLM. Oh, and usually, people add an extra token to multimodal sentences which tells the model whether a particular input token is an <image>, <text>, or <action> token, so that the model can later learn to output image tokens, text tokens, or action tokens. Which is how it “decides” to respond with text or an action or something else. (In case you ever wondered how “fully unified” multimodal models “decide” which modality to output, and how those were different to the ones that use text-only LLMs to generate text prompts which are then fed into image generation models like DALL-E).

What’s an “action” token?

This depends on what actions are available to you. If you have a simple joystick that can go <up>, <down>, <left>, <right>, and maybe 2 buttons to push, <A> and <B>, you have 6 actions, so each of those actions can be encoded as is into an action token.

If your robot arm has more actions available, like maybe it’s got extra dimensions and can move to an X, Y, and Z coordinate, and also <open_hand>, then you simply map those extra dimensions onto some number of bins of your choosing, and discretize across the dimensions. The exact details of how don’t matter here. The important thing is that in the end, you end up with discrete action tokens, which tell a controller or robot arm thing to do some action it is capable of executing.
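As a rough sketch of the binning idea, the snippet below maps a continuous X, Y, Z command onto integer bins and emits one token per dimension. Everything specific here is an assumption for illustration: the coordinate range of -1.0 to 1.0, the choice of 256 bins, the token spellings, and the `<close_hand>` token are not taken from any particular robot or paper.

```python
def discretize(value, low, high, num_bins):
    """Map a continuous value in [low, high] to an integer bin index."""
    if not low <= value <= high:
        raise ValueError("value outside the allowed range")
    fraction = (value - low) / (high - low)
    # min() keeps value == high inside the last bin.
    return min(int(fraction * num_bins), num_bins - 1)


def arm_action_to_tokens(x, y, z, open_hand, num_bins=256):
    """Turn one hypothetical arm command into discrete action tokens."""
    tokens = [
        f"<{axis}_{discretize(value, -1.0, 1.0, num_bins)}>"
        for axis, value in (("x", x), ("y", y), ("z", z))
    ]
    tokens.append("<open_hand>" if open_hand else "<close_hand>")
    return tokens


print(arm_action_to_tokens(0.0, -1.0, 1.0, open_hand=True))
# ['<x_128>', '<y_0>', '<z_255>', '<open_hand>']
```

More bins give finer control at the cost of a larger action vocabulary, which is why the bin count is "of your choosing".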

So now we understand image and action tokens.

If you throw everything into a single transformer, you get “multimodal sentences”, which were nicely visualized in Figure 1 of the PaLM-E paper, one of the earlier vision-language-action models from Google.

Which means theoretically, we could now do the same thing as before — figure out how many tokens RT-2 uses, then how many bits each type of token takes, then multiply to count up the number of training bits it took to create something like an RT-2. Then we could see how many orders of magnitude more or less data it takes to train something that’s more similar to humans.
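In code, that bookkeeping would look something like the sketch below. The token counts and bits-per-token values are placeholders picked purely so the arithmetic can run; they are not figures from the RT-2 paper or anywhere else. (The image value assumes a hypothetical 16x16 patch with 3 colour channels at 8 bits each.)

```python
from math import log10


def total_training_bits(token_counts, bits_per_token):
    """Sum (number of tokens * bits per token) over every token type."""
    return sum(token_counts[kind] * bits_per_token[kind] for kind in token_counts)


def orders_of_magnitude(bits_a, bits_b):
    """How many powers of ten larger bits_a is than bits_b."""
    return log10(bits_a / bits_b)


# Placeholder values only, NOT real dataset statistics.
example_counts = {"text": 1_000, "image": 200, "action": 50}
example_bits = {"text": 16, "image": 16 * 16 * 3 * 8, "action": 8}

total = total_training_bits(example_counts, example_bits)
print(total)  # 1245200
```

With real counts plugged in, `orders_of_magnitude(human_bits, model_bits)` would give the comparison directly.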

Sadly, life is not that easy.

In the Datasets section of the RT-2 paper they say:

The vision-language datasets are based on the dataset mixtures from Chen et al. (2023b) and Driess et al. (2023). The bulk of this data consists of the WebLI dataset, which is around 10B image-text pairs across 109 languages, filtered to the top 10% scoring cross-modal similarity examples to give 1B training examples. Many other captioning and vision question answering datasets are included as well, and more info on the dataset mixtures can be found in Chen et al. (2023b) for RT-2-PaLI-X, and Driess et al. (2023) for RT-2-PaLM-E. When co-fine-tuning RT-2-PaLI-X, we do not use the Episodic WebLI dataset described by Chen et al. (2023a).

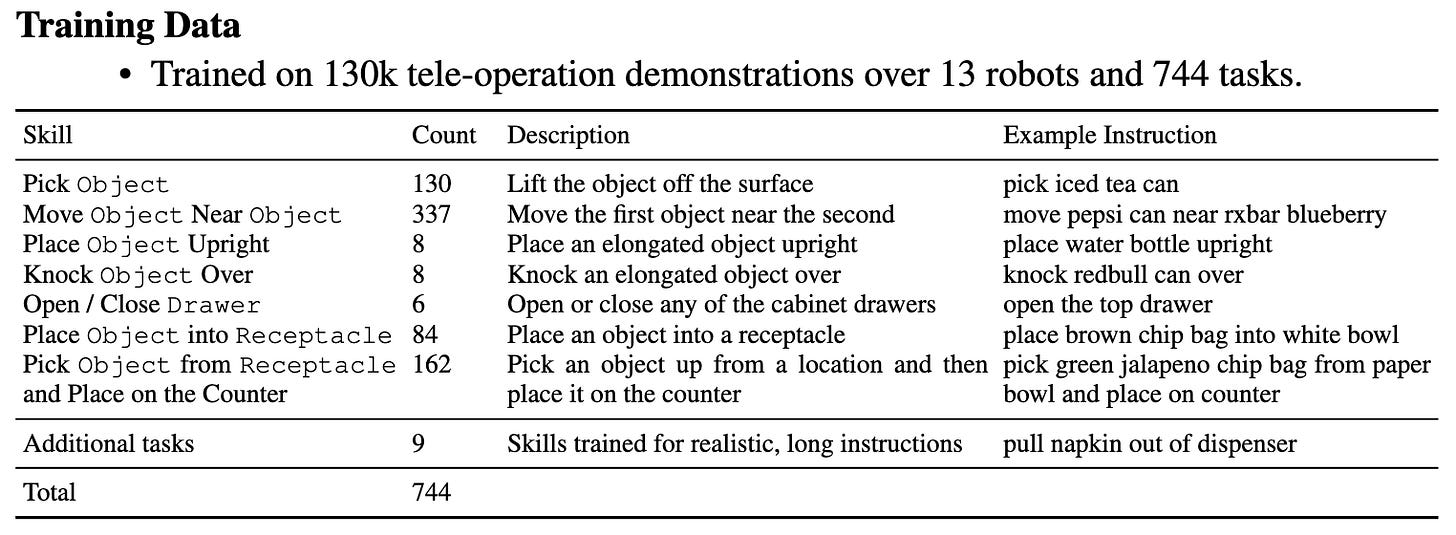

The robotics dataset is based on the dataset from Brohan et al. (2022). This consists of demonstration episodes collected with a mobile manipulation robot. Each demonstration is annotated with a natural language instruction from one of seven skills: "Pick Object", "Move Object Near Object", "Place Object Upright", "Knock Object Over", "Open Drawer", "Close Drawer", "Place Object into Receptacle", and "Pick Object from Receptacle and place on the counter". Further details can be found in Brohan et al. (2022).

What is 1 billion image-text pairs in gigabytes?

I took a peek at Chen et al. (2023b) and Driess et al. (2023), but honestly, I could not find a straightforward number, because those papers link to even more papers, and I just wanted to do a quick estimate here. 🥲

I did also look at Brohan et al. (2022), which is the RT-1 paper, just to see if I could find a quick number, but alas, I am unsure what 130k teleoperation demonstrations are in bits. I’m sure it’s possible to pull out the relevant numbers somehow…but for now, it’s beyond me. If someone comes across this post and can do the estimation, please do drop a comment!

I also tried looking at something more open-source, like OpenVLA, to check if I could maybe find a more straightforward number. Maybe the opacity from the Google work is just because I don’t have enough expertise in vision and language and robotics on the machine side of brains yet. (I spent my millions of waking seconds so far on learning the human side of brains. One day, I’ll get there. But that day is not today.)

From the OpenVLA paper:

In this work, we build on the Prismatic-7B VLM [44]. Prismatic follows the same standard architecture described above, with a 600M-parameter visual encoder, a small 2-layer MLP projector, and a 7B-parameter Llama 2 language model backbone [10]. Notably, Prismatic uses a two-part visual encoder, consisting of pretrained SigLIP [79 ] and DinoV2 [25] models. Input image patches are passed separately through both encoders and the resulting feature vectors are concatenated channel-wise. In contrast to the more commonly used vision encoders such as CLIP- [80] or SigLIP-only encoders, the addition of DinoV2 features has been shown to be helpful for improved spatial reasoning [44], which can be particularly helpful for robot control.

SigLIP, DinoV2, and Llama 2 do not release details about their training data, which likely consists of trillions of tokens of Internet-sourced image-text, image-only, and text-only data respectively. The Prismatic VLM is fine-tuned on top of these components using the LLaVA 1.5 data mixture [ 43 ], which contains a total of approximately 1M image-text and text-only data samples from open-source datasets [29, 42, 81–83].

Argh.

What about audio?

Oh yeah, audio multimodal models also exist. VALOR is one example of an open source vision-audio-language pretrained model.

But was there any straightforward description of the size of their pretraining data?

Nope.

The data that is downloadable from the linked GitHub repo amounts to roughly 40GB, but the bulk of their pretraining data is from YouTube videos, and I can’t easily figure out how many GBs of video they extracted.

A human-shaped robot

In any case, this is an addendum because I wasn’t motivated to put in too much effort into tracking down the numbers to estimate the pretraining dataset for the little vision-language-action models with robot arms or the vision-audio-language model (though I still wanted to acknowledge the very reasonable thought).

I wasn’t motivated to do this because whatever number I get, I know the next objection would be something along the lines of:

“Oh, those models are still a lot simpler than humans. Even if you added things together to estimate the pretraining data for a vision-audio-language-action model with one tiny robot arm…well, us humans have two arms. And legs too. And a whole torso. And mouths! This matters because what actions you can take affects the amount and type of concepts and language you can learn because you create words to describe what you can do, and words to express what you can’t do in relation to the things you can do.”

Which is a very fair objection.

But also…it’s only a matter of time before someone open-sources a humanoid robot.

So here we go.

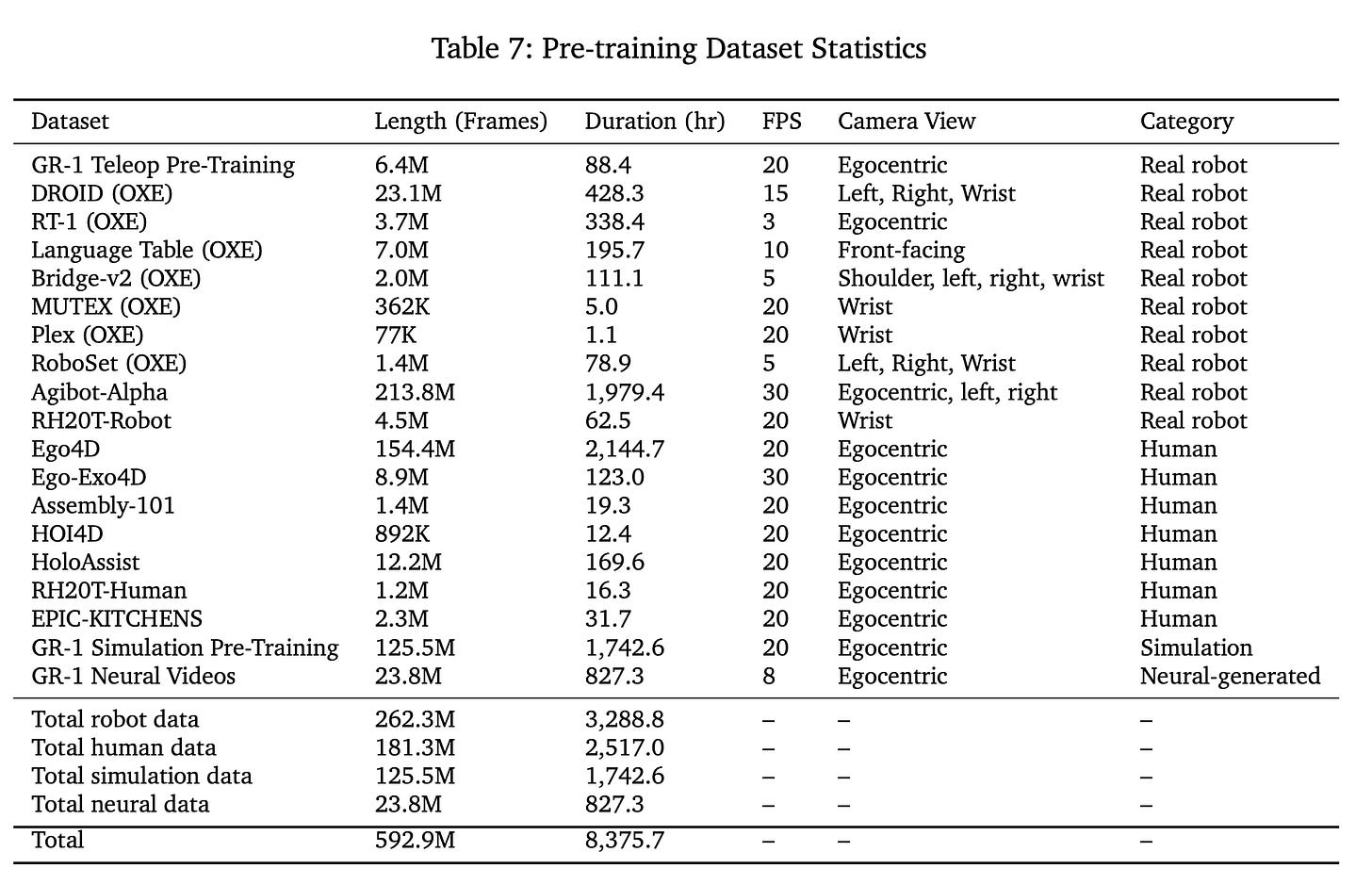

Presenting NVIDIA’s Isaac GR00T N1.

GR00T N1 doesn’t output text tokens and only outputs action tokens, unlike other models (e.g., the ones mentioned above), but frankly…that’s clearly just a choice at this point (albeit probably a nontrivial economic choice). We know it’s doable, and the space of outputs doesn’t materially affect thinking about the size of a pretraining dataset anyways.

They did provide a nice table of their pre-training data at the literal end of the paper. But heck if I know how to estimate how many GBs “10 years of training in 2hrs in simulation time” equates to. It sounds like a lot though.

Maybe when we keep piling on individual capabilities, the final vision-audio-language-action AI does end up being equivalent to our human hundreds of quadrillions of pre-training bits at some point.

(1 petabit = 1 quadrillion bits.)
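For a sense of scale, here is a small unit-conversion sketch applied to the roughly 40GB VALOR download mentioned earlier. It assumes decimal gigabytes (10^9 bytes), which may not match how that download is actually measured, and the helper names are made up for this example.

```python
BITS_PER_BYTE = 8
BYTES_PER_GB = 10**9       # decimal gigabyte; an assumption
BITS_PER_PETABIT = 10**15  # 1 petabit = 1 quadrillion bits


def gigabytes_to_bits(gigabytes):
    """Convert decimal gigabytes to bits."""
    return gigabytes * BYTES_PER_GB * BITS_PER_BYTE


def bits_to_petabits(bits):
    """Convert bits to petabits (quadrillions of bits)."""
    return bits / BITS_PER_PETABIT


valor_download_bits = gigabytes_to_bits(40)
print(valor_download_bits)                    # 320000000000
print(bits_to_petabits(valor_download_bits))  # a small fraction of one petabit
```

So 40GB is about 3.2 x 10^11 bits, several orders of magnitude short of even a single petabit, though that download is only a small slice of what the model was pretrained on.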

Aside: Remembering other biological brains exist

You may also be wondering if we could estimate the pretraining data being processed by other animals with biological brains to see if the artificial neural network/simulated versions of those animals required roughly the same size pretraining data. You absolutely could.

Here's a virtual rat from Harvard ft. DeepMind (paper) and a virtual fly in case someone wants to try that exercise. I’m not motivated enough to do this with rats and flies, but drop me a comment on the results if you do!

In the meantime, I’ll be here waiting for a virtual cat.

Remembering variation in the human experience

Though, I don’t really think we have to wait for LLMs to become “as embodied as humans” to make sensible estimates, because the range of human experience is varied. When we’re talking about AI embodied in machines, there’s no real reason to just limit things to the typical range of human capabilities when estimating how much data it takes for us to learn concepts.

For example, take the concept of colour, at all levels of understanding, from the qualitative perceptual level to the higher abstracted concept.

The modal human is a trichromat with 3 types of colour photoreceptor (cones) in the eye, so the modal human sees in roughly 3-dimensional RGB.

Some humans are completely colourblind and only see in 1-dimensional greyscale. Some are dichromats, who are partially colourblind and see in 2-dimensional colour (usually missing the red-green dimension). There may even be a small population of humans who are tetrachromats and see in 4-dimensional colour [1].

Also, some humans are even completely blind from birth. What does the human brain do with all its neurons that don’t get visual input then?

Does it make sense to subtract all the visual bits that would’ve been coming in through their eyes to estimate how much pretraining data a blind person’s brain gets when learning about the concept of colour?

I think it doesn’t.

It’s clear that a person blind from birth can still acquire the language of what is typically thought of as “visual” concepts like “colour”, even if it isn’t attached to any subjective experience or memories of colour. In the same way, a text-only ChatGPT using the words “orange” or “blue” does not mean ChatGPT perceives visual colour, but it’s also not just randomly spewing out colour words.

This is not opinion, by the way.

Respectfully, this is quite literally how Tommy Edison, a person fully blind [2] from birth, describes his own understanding and (non-)experience of colour. You can hear that he uses colour words like “blue” (it used to be his favourite colour before purple!) and knows certain facts about which objects are what colour when told, like the fact that his parents told him his room was painted blue at one point.

But that’s about it, as he explains.

He’s never seen, experienced, or perceived colour. The whole concept of colour is a sidenote to his experience of the world (e.g., he does not think “yellow” when someone asks him to think of a banana). He also says it doesn’t help him if you describe one sense (e.g., of colour) with another sense (e.g., of touch).

This is because senses are orthogonal to each other the way any <image> vs any <action> token in an LLM’s multimodal sentence could theoretically be paired with one another. You can touch a sheet of paper, and that paper could be any colour of the rainbow. So knowing what a sheet of paper feels like gives a brain no information on what colour it should predict the sheet of paper could be — until you train it up with data of course (where possible).
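One way to put a number on "gives a brain no information" is mutual information, which is a framing I am swapping in here rather than something from the sources above. The sketch below uses a made-up toy world with two textures and four colours: when every texture is equally likely to come in every colour, knowing the texture tells you 0 bits about the colour, and that only changes once the data pairs them up.

```python
from math import log2


def mutual_information(joint):
    """Mutual information in bits for a dict {(a, b): probability}."""
    p_a, p_b = {}, {}
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0.0) + p
        p_b[b] = p_b.get(b, 0.0) + p
    return sum(
        p * log2(p / (p_a[a] * p_b[b]))
        for (a, b), p in joint.items()
        if p > 0
    )


textures = ["smooth", "rough"]
colours = ["red", "green", "blue", "yellow"]

# Untrained case: any texture can come with any colour, all equally likely.
independent = {(t, c): 1 / 8 for t in textures for c in colours}

# Toy "trained" world: smooth paper is always red, rough paper is always blue.
paired = {("smooth", "red"): 0.5, ("rough", "blue"): 0.5}

print(mutual_information(independent))  # 0.0
print(mutual_information(paired))       # 1.0
```

In the second world, touch carries exactly 1 bit about colour, but only because the (hypothetical) training data made the two co-occur.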

This is why I think it does not make sense to subtract 100% of the missing “visual” bits of information from a person’s pretraining data just because one’s eyes don’t work (though a partial subtraction would make sense to me). A blind person’s brain and all its neurons are still there.

And we know that neuroplasticity is a thing.

Neurons without input will not just sit idly, if the brain is young enough. A young brain can and will rewire itself to whatever input it does get, even if it doesn’t happen 100% for all neurons. This happens in cats (see Auditory compensation for early blindness in cat cerebral cortex) and humans (“Visual” Cortex Responds to Spoken Language in Blind Children). So a pretraining bit that might have originally been a “visual information” bit coming from the eye would just now be an “audio information” bit coming from the ears. Or maybe “tactile information” bit from the hands.

The real bottleneck for the information processing of the high-level, abstracted versions of a concept is actually attention in humans, not really the capacity of our sensory organs (though that capacity does matter, in that you can’t attend to what you don’t have sensors/receptors for). This is why Tommy Edison’s experience of sound in the world is probably much richer than a typical, non-musician, sighted person’s world of sound. Not by magic, but through the regular neural processes that happen when one pays more attention to a different part of their experience of the world.

This also explains, as Tommy Edison wonders, why it’s possible for sighted people to miss something going on right in front of their faces, or to lose things that might be within their visual field. It’s also why, e.g., Heather Lawson’s sense of smell started getting stronger when she lost her sense of sight.

In very hand-wavy terms, I think of this as “concept learning tends to expand to the resolution of your effectively-attended-to input data”. Or in a cleaner sentence, "your ability to learn concepts is limited by the level of attention you pay." All of this is to say that the type of information doesn’t affect the size of one’s training data—the thing I was originally trying to estimate—as much as you might initially assume.

Neurons themselves don’t know what they are or what they’re supposed to be doing. It’s not like a neuron would go “Hey, I’m supposed to be a visual neuron. But there isn’t any input from the eyes. I guess I’ll just go ahead and atrophy then, because I refuse to deal with auditory data.”

How would this point apply to a multimodal LLM?

In any case, if you are not used to thinking about brains and minds which might be quite outside your own experience with whatever body or mind you happen to have, starting with LLMs may be starting on hard mode.

Feel free to warm up those empathy muscles with fellow humans first.

[1] We know birds, at least, are definitely tetrachromats. Other animal species have different numbers of photoreceptor types in their eyes. The mantis shrimp famously has 12, though having the 12 dimensions may not mean what you think it means.

[2] Blindness comes in degrees of severity.

"The real bottleneck for the information processing of “concepts” is actually attention in humans, not the capacity of our sensory organs."

This sounds right, but would massively reduce the brain's input capacity versus LLMs. I can't say I've looked into the research, but human attention is typically quoted to be only 40–60 bits/sec.

That said, I think you're right to point out the huge amount of pretraining that must be required for us to even know what to pay attention to. This is the problem of signal detection, and is a function of maturity.

One thing I'm not quite getting though - if we say that 5 year olds have typically been exposed to around 25 million spoken words and LLMs to billions of written words - is why (if we convert to the common currency of bits) that's not a fair comparison?