Are you practicing "EFFECTIVE altruism" or "effective ALTRUISM"?

Effective Altruism is bistable, which is why it can drive extreme actions for both good and bad: from donating organs to committing fraud.

Recently, there’s been a surge in people condemning Effective Altruism (EA), which has sparked a round of defenses in turn. This isn’t particularly new, in that there have always been people who encounter EA the philosophy / message / lifestyle / social movement, and then decide it’s not for them, or call it “a dangerous philosophy” with well-reasoned arguments.

Rather than offer condemnations or defenses one way or another, I’ve been wondering about what it is about EA that makes it so easy to love or hate.

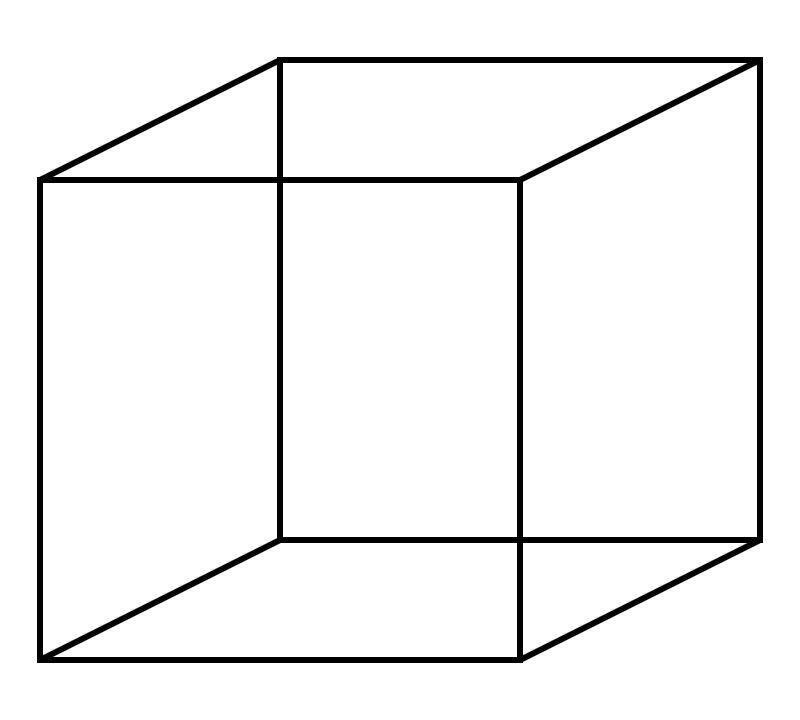

I’ve noticed that when people read “Effective Altruism”, some will hear “EFFECTIVE altruism” while others will hear “effective ALTRUISM”, like the perception of a bistable Necker Cube, or the spinning ballerina.

This makes for rather bimodal outcomes.

[Edit Jul 29th 2024: Which might correlate with what Vitalik Buterin observed as a difference in the “online” version of EA and the “in-person” version of EA.]

Most people in the middle will hear “effective altruism” and think it’s about taking actions that are effective, as in, produce some result (as opposed to things that don’t produce results), and altruistic as in, actions that help other people (as opposed to just yourself). Which all sounds very normal and uncontroversial. These people are probably wondering what all the fuss is about.

If you wanted to create an Effective Altruistic AI, let’s say a relatively simple one that was formulated as a constraint satisfaction problem, would you prefer one that generated actions which were EFFECTIVE subject to altruism as the constraint, or ALTRUISTIC subject to effectiveness as the constraint?

Is there supposed to be a difference? In theory no. In practice, probably yes.

Multi-objective optimization - An analogy

Imagine you are searching for some “best” magic number. For the sake of example, let’s also say you have a magic tablet that tells you whether a number is “better” or “worse” if you type in a number. You just have to generate numbers to check. Go ahead and generate 10 numbers as your first guesses.

…

…

…

…

…

My first guesses were: 0, 1, 2, 7.826, 6 + 2i, 7 + 3i, 24, e, -23, -67.678. Was there any overlap with the numbers you had in mind?

There are a lot of numbers out there, so let’s say a fairy helps us out by telling us the magic number is odd. That helps narrow things down a little (I can remove 2, 24, probably 7.826, 6 + 2i, and a few others from my guess pool) but there are still a lot of numbers to guess from.

Then the fairy says the number is less than 20. Now we have an even smaller pool of (still infinite) numbers to guess from. Yay!

The important thing, though, is that in theory it shouldn’t matter that the fairy said the number was odd first, and then less than 20. If it had said the number was less than 20, and then odd, we should still, in the end, have roughly the same final set of numbers. In theory, it doesn’t matter that I removed “24” as a guess sooner rather than later, as long as it got removed.
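Here is a minimal sketch of that “in theory” claim. It assumes something the analogy does not: that the whole pool of candidate numbers is finite and already written down (here, the integers from -50 to 50, chosen arbitrarily), so both constraints can be applied to all of it.

```python
# Assumption for illustration: a small, fully enumerated pool of integers.
pool = list(range(-50, 51))

def is_odd(n):
    return n % 2 == 1  # in Python, -3 % 2 == 1, so negative odds count too

def is_below_20(n):
    return n < 20

# Apply the fairy's hints in one order...
odd_then_small = [n for n in pool if is_odd(n)]
odd_then_small = [n for n in odd_then_small if is_below_20(n)]

# ...and in the other order.
small_then_odd = [n for n in pool if is_below_20(n)]
small_then_odd = [n for n in small_then_odd if is_odd(n)]

print(odd_then_small == small_then_odd)
```

When every candidate gets checked against both hints, the order of the hints cannot change which candidates survive.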

But that’s in the end.

In practice, with finite time, the order in which the fairy tells you the constraints matters if you were generating your guesses sequentially, one-by-one or 100-by-100, in any systematic-ish manner.

If the fairy said “odd” first, then I wouldn’t have to bother with guessing numbers like 2 and 7.826, and might start with -333, -1, 1, 333, 555, 777 etc.

If the fairy said “less than 20” first, I’d probably start guessing from 19.9999999, -18, 16 + i, etc.

With finite time, the numbers I’ve generated from processing the two constraints in different orders (“odd, then less than 20” and “less than 20, then odd”) will give me two different sets of numbers to find the “best” or even just a “better” number, even if I have the exact same tablet with the same function and criteria that defines “better” or “worse” in exactly the same way.
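A rough sketch of the finite-time version, under loudly stated assumptions: guesses are restricted to integers, the “odd first” guesser walks outward from zero, the “less than 20 first” guesser counts down from 19, and each gets a budget of 10 guesses. The function names and the budget are hypothetical, picked only to make the point visible.

```python
from itertools import count, islice

def odd_first():
    """Yield odd integers outward from zero: 1, -1, 3, -3, ..."""
    for k in count(1, 2):
        yield k
        yield -k

def below_20_first():
    """Return an iterator counting down from 19: 19, 18, 17, ..."""
    return count(19, -1)

def search(candidates, remaining_constraint, budget):
    """Take `budget` guesses, then keep those passing the second hint."""
    guesses = islice(candidates, budget)
    return {n for n in guesses if remaining_constraint(n)}

BUDGET = 10  # finite time: only ten guesses each

odd_then_small = search(odd_first(), lambda n: n < 20, BUDGET)
small_then_odd = search(below_20_first(), lambda n: n % 2 == 1, BUDGET)

print(sorted(odd_then_small))
print(sorted(small_then_odd))
print(odd_then_small & small_then_odd)  # numbers both searches reached
```

With these particular generators, the two searches share no numbers after ten guesses, even though both are heading toward the same set (odd integers below 20). Raising `BUDGET` makes the overlap grow.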

The difference in the generated sets of numbers gets worse the more dissimilar the starting points are for two people. The difference also gets worse the more dissimilar the way two people generate their next guesses. (The tablet that tells you “better” or “worse” helps with making the number guesses become more similar to each other depending on the function it uses, but I’m going to leave that aside since it’s not the main point here).

The flip side is that if two people happen to have similar initial starting points and similar methods of generating subsequent numbers, then the overlap will be large, and it will not be obvious that the two people started from different places. For example, if Alice starts at 0, and guesses 2, 3, 4, 5… etc. while Bob starts at -4, and guesses -2, 0, 2, 4… etc. for 10 numbers, Alice and Bob will end up having a decent amount of overlapping numbers.
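To put rough numbers on this, here is a small sketch. It reads Alice’s sequence as consecutive integers starting from 0 and Bob’s as steps of 2 starting from -4, which is one interpretation of the example. The third guesser, `far`, is hypothetical and added only to show the dissimilar case.

```python
def guesses(start, step, n=10):
    """Return the first n guesses from a start point and a fixed step."""
    return {start + step * i for i in range(n)}

alice = guesses(start=0, step=1)    # 0, 1, 2, ..., 9 (assumed reading)
bob = guesses(start=-4, step=2)     # -4, -2, 0, ..., 14
far = guesses(start=1000, step=7)   # hypothetical far-away guesser

print(sorted(alice & bob))   # numbers Alice and Bob both guessed
print(len(alice & far))      # numbers Alice shares with the far guesser
```

Under these assumptions, Alice and Bob share half of their ten guesses, while Alice and the far-away guesser share none.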

“EFFECTIVE altruism” or “effective ALTRUISM”?

Now imagine someone is trying to consider what to do with their life, and what actions they can take. Maybe the decision is about:

a career to pursue

a charity to donate money to

a cause area to do research in

what financial compliance rules to follow or keep track of

what CEO to have in charge of some organization etc.

This person(‘s brain) needs to generate a set of possible actions they can take, and then search for a “best” next action, and they don’t have infinite time to think about this.

How might you tell the difference between someone who is processing “effective ALTRUISM” (emphasis on altruism) vs “EFFECTIVE altruism” (emphasis on effectiveness)?

Completely speculative, but I wonder if you might be able to tell by looking at the order in which they generate possible action options, the way you could look at what numbers were first generated as guesses in the analogy above? (There’s definitely a fun experimental philosophy / vignette survey style experiment one could conduct here to check this hypothesis, if one were inclined to.)

Maybe those who hear “effective ALTRUISM” start by enumerating action options that are primarily altruistic, like reducing the number of people suffering from X disease or reducing the number of animals suffering, and then filter for actions that are more/less effective ways of doing that. I feel like this kind of approach is the train of thought that leads people to:

start organizations like GiveWell

write books about “Doing Good Better” (which I parse as “doing good, better” - note the order!)

or any of the other things on this list.

And for those who hear “EFFECTIVE altruism”, they start with enumerating action options that are primarily about being effective in the world, (i.e., can help them effect some change in the world for multiple reasons), like earning money, good will, reputation, career capital etc., and then filter for actions that are more/less altruistic. This kind of approach might lead to ideas like earning to give and 80,000 hours, but also selling your granny, committing fraud like an SBF, or potentially even something more extreme.

Notably, it seems to me that the actions under consideration when someone is coming from mostly an “effective ALTRUISM” point of view are actions where there’s an option to *not* do the thing. It is entirely possible to live a modern human life and *not* donate money to charity, or *not* donate a kidney to anyone.

Whereas actions considered primarily from an “EFFECTIVE altruism” point of view seem to consist of actions that you more or less *have* to do in a normal human life, so the only knobs you can tweak are more/less. You can’t really expect most people to live life on earth in this day and age and *not* earn money, *not* work or have a career, *not* interact with anyone so as to have *no* reputation, and actively *not* be nice to anyone all the time and earn no one’s good will (being mean is hard!). So if you’re going to be doing those things anyway, you might as well filter for more altruistic options amongst the set available to you.

In theory, aiming to be EFFECTIVE subject to altruism as the constraint, or aiming to be ALTRUISTIC subject to effectiveness as the constraint should give you an equivalent set of action options to consider “in the end”, just like with the numbers analogy.

But in practice, no one has infinite time.

This happens even if two people have the exact same criteria for what is “better” or “worse” for the world, which is already an unrealistic best case scenario.

Also, remember how in the number guessing analogy, people with similar initial starting points and guessing strategies will have more overlap in their sets of guessed numbers? And the further apart the initial starting points and the more dissimilar the methods by which subsequent guesses are generated, the more dissimilar the sets of numbers will end up?

Well, an effective ALTRUIST might encounter an EFFECTIVE altruist, and think the EFFECTIVE altruist who is noticeably emphasizing EFFECTIVE more than average is considering actions that are not altruistic enough and maximising too hard. An EFFECTIVE altruist might encounter an effective ALTRUIST, and think the effective ALTRUIST who is noticeably emphasizing altruism more than average is not maximising enough - i.e., not considering actions that are effective enough (e.g., Freddie DeBoer thinks it’s ludicrous that he’s hearing EA debates on termite sentience, or that EA led at least one person to spend resources considering whether any animal that consumes other animals should be allowed to exist.). In either case, the people who are noticeably too much one way or another will be people who:

Started from far away initial points (relative to others).

Have a sparser/denser way to generate their set of potential actions (relative to others).

Have spent a lot longer thinking / generating potential actions further away in the “space” of potential actions (relative to others)

So then one sub-group gets mad at the other, saying that “effective ALTRUISM” isn’t really true “Effective Altruism” because some portion of EAs spend time on ineffective, useless things like thinking about termite suffering, or, the other way round, that true “Effective Altruism” is “EFFECTIVE altruism”, which is dangerous when pushed to extremes, as in the case of SBF and FTX.

By the way, I don’t mean to imply deliberate intent if your mind starts from either effectiveness or altruism, any more than I would attribute deliberate intent to someone giving an initial guess of 2894567 versus -0.2 in the “find the best number” analogy above, or to someone seeing the spinning ballerina spin one way or the other at first.

I also don’t mean to imply that a single person(‘s mind) only ever operates in one mode or the other, the same way the same person can spontaneously (and indirectly willfully, if you know how) perceive both sides of a bistable image. The important thing to remember is, images like the Necker cube and the spinning ballerina are confusing to the brain because these specific, un-naturalistic images or videos, lack the contextual cues that your visual perceptual system would usually have when looking at real objects in the real world. I can imagine the same thing might be happening if someone learns about effective altruism from just one book, one person, a few small tweets, or even a few news articles here and there. If you don’t have the semantic equivalent of contextual cues to put things into perspective when you try to apply concepts learned in cleaner, less complicated thought experiments like a child drowning in a pool to a more complex scenario like the real world, then you might get stuck trying to answer an unanswerable question, like, “is the ballerina really spinning left or right” or “is the ballerina supposed to be spinning left or right”?

(Hint: the video is a synthetic 2D video, specifically created to induce an illusion. There does not exist an “original” or “actual” ballerina spinning in the 3D world.)

Finally, I’m not sure making an AI could help us with the general problem of having more than one optimisation objective, without someone already solving that exact problem to make the AI. For example, a slightly more complicated EA AI, like a constrained RL agent that generates actions which are ALTRUISTIC subject to effectiveness as a constraint, is probably what I’d prefer to have. But things get loopy and circular when there are multiple objectives which depend on and loop back on one another, which is unlike the “guess the number” analogy (i.e., a number being odd has no effect on whether the number is less than 20).

If scores for effectiveness can loop back to boosting what’s considered more/less altruistic, and scores for altruism can be modulated by how effective an action is…I haven’t seen anything that can deal with this type of problem yet. If there is something out there you know of, please point me to it!

(Edit: I found this interesting paper which hypothesizes that maybe brains doing circular inference is actually what causes bistable perception. Which is unfortunate because I think the novelty of the EA message is that you should let effectiveness influence your consideration of altruistic actions to take, and let actions that have a higher “effectiveness” score increase that action’s “altruism” score and vice versa. If bistability is the price for trying to do this circular inference … well perception works fine in the complex real world 99% of the time. The remaining 1% is when you find edge case stimuli like the Necker cube.

So I think most of what actions are considered “good EA” would probably be fine and consistent amongst most people, most of the time. The remaining 1% of the time you might get complaints about “but SBF!” or “why are we wasting resources on termites?”)

It’s too bad there are multiple actions you could take to be effective in the world which aren’t altruistic, and multiple actions you could take to be altruistic in the world which aren’t effective. As it turns out, Effectiveness and Altruism are probably orthogonal.

[Edit: Jul 29th 2024] And so in the EA and AI safety space, we get this wonderful Spinning Ballerina:

It’s interesting, you just said, “the kind of pausing AI that effective altruists are in favour of.” The crazy thing is that people who are influenced by effective altruism, or have been involved in the kind of the social scene in the past, are definitely at the forefront of groups like Pause AI who want to just basically say, the simple message is we need to pause this so that we can buy time to make it safe. They’re also involved in the companies that are building AI and in many ways have been criticised a lot for potentially pushing forward capabilities enormously. It is a very bizarre situation that a particular philosophy has led people to take seemingly almost diametrically opposed actions in some ways. And I understand that people are completely bemused and confused about that. (Vitalik Buterin on 80k podcast, emphasis mine)